The Mirror That Breaks Things: Notes from ProductCon London 2026 | #97

My notes from ProductCon London 2026 - eleven talks, one uncomfortable message: AI doesn't create your problems. It just makes them impossible to look away from.

I was sitting in a session at the Barbican, watching Ashley Nutter (SVP Product, CNN) draw a diagram.

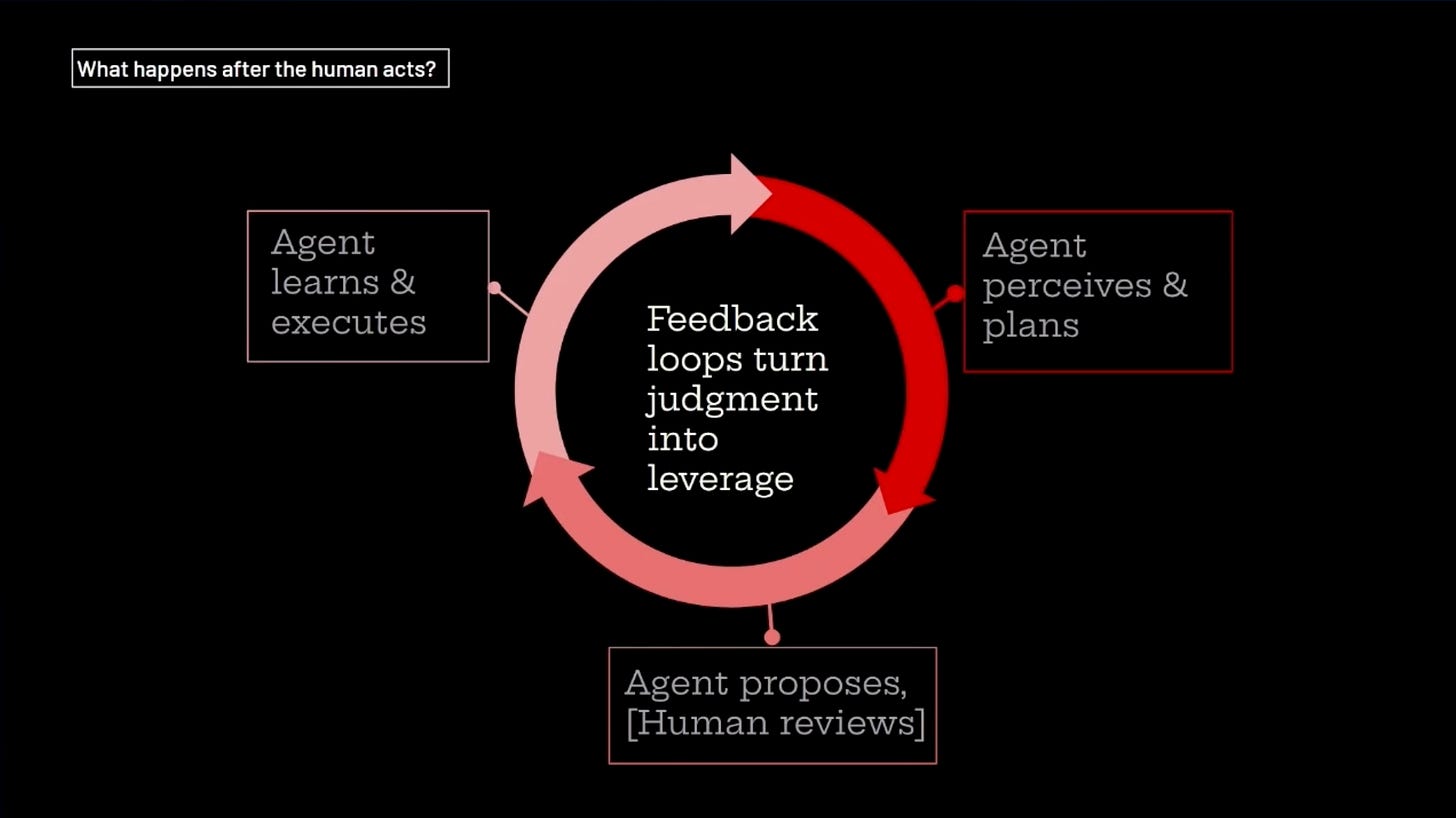

It was a loop. Agent perceives and plans. Agent proposes. Human reviews. Agent learns and executes. In the centre of the loop, she’d written six words:

“Feedback loops turn judgment into leverage.”

She could have opened her talk with it. She ended with it instead - because the twenty minutes before it were the argument for why it’s true.

I wrote it down. I took a photo.

And then I sat with the slightly uncomfortable realisation that most of the human-in-the-loop processes I’ve been involved with over the years weren’t feedback loops at all. They were gates - checkpoints that consumed a human decision and moved on, without that decision ever teaching the system anything.

That thought stayed with me through the rest of the day. By the close of the afternoon, Jessica Hall (CPO, Just Eat Takeaway) had given it its sharpest name.

The through-line at ProductCon London earlier this week wasn’t “use AI” or “move faster.” It was something harder to sit with:

AI doesn’t create your problems. It just puts them in high definition.

(A quick note before we get into it: I was trying to listen and capture quotes at the same time. My thumbs can’t keep up. Some of the longer quotes below are close paraphrases rather than verbatim. Anything in quotation marks is either exact or very close to it. The substance is intact.)

The Debt You’re Pretending Isn’t Debt

Nilan Peiris (CPO, Wise) opened the morning connecting directly to the event’s headline: Build something that doesn’t exist. [Recording Link]

His argument was a quiet one, but it had teeth.

“The companies that consistently score in the top 90% of NPS create something that never existed.”

The implication: you can’t build something that doesn’t exist if you’re buried under something that shouldn’t.

Technical debt isn’t a backlog problem. It’s a capital allocation decision - and most teams are making it implicitly, by avoiding the conversation, rather than explicitly, by having it.

If you’re not pricing debt into your roadmap conversations the same way you price features, you’re not managing it. You’re deferring it. And the cost compounds.

Side note: This connects to something I wrote about in #74 - that debt has to be priced as a capital decision, not managed as a backlog problem. I still don’t have a clean answer to this for my own team. I’m working on it.

Carlos González de Villaumbrosia (CEO, Product School) followed with a frame that coloured everything else in the room. [Recording Link]

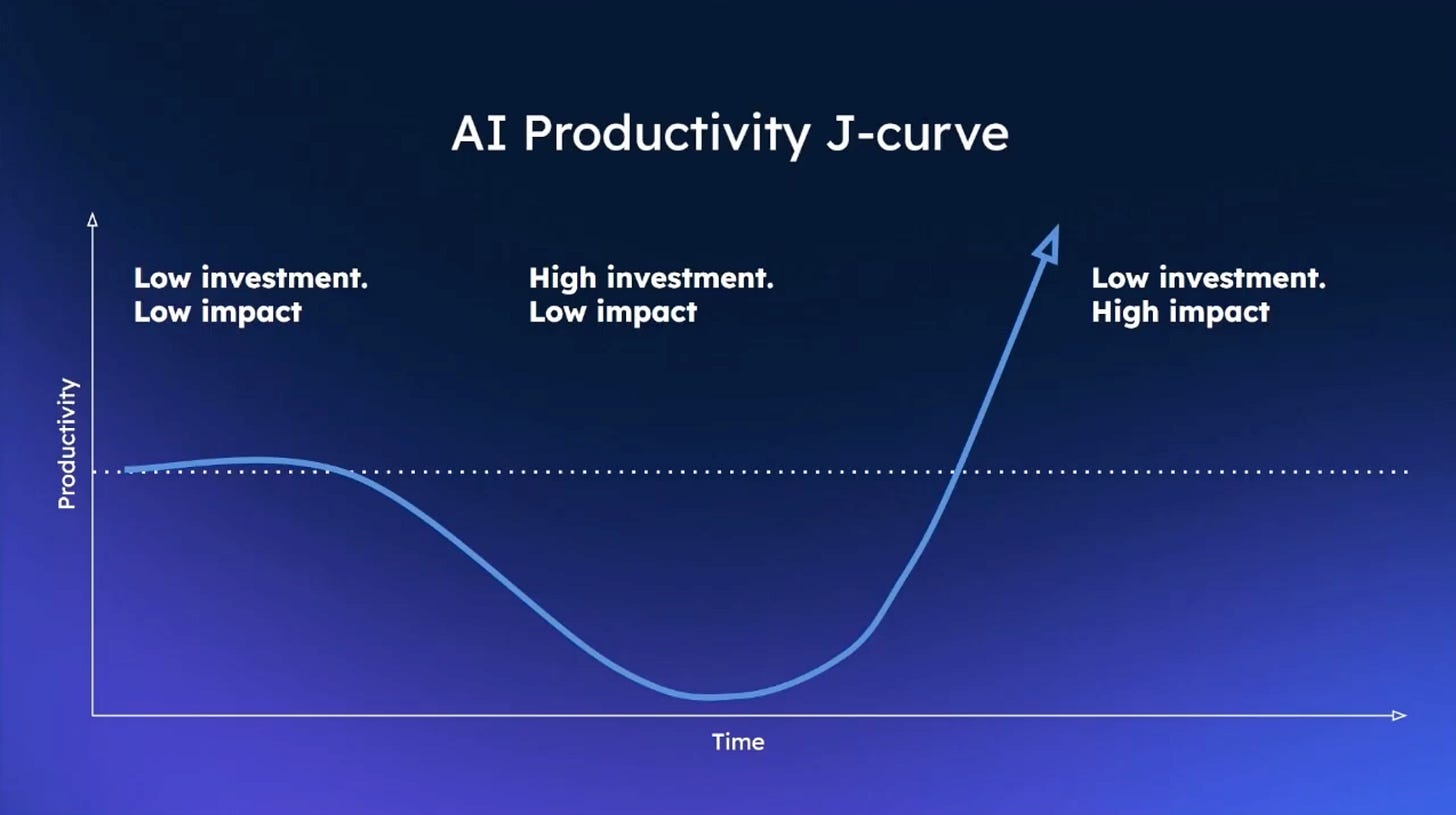

The AI productivity J-curve: low investment and low impact, then high investment and still low impact - the trough - then finally low investment and high impact. Most product organisations are sitting somewhere in that middle section right now. The investment has gone in, the context work is slow and unglamorous, and the returns haven’t arrived yet.

That was the emotional backdrop for the whole day.

The Loop Nobody’s Closing

Ashley Nutter’s talk was the most structurally tight of the morning. [Recording Link]

Her whole argument was essentially one sentence: human review only has value if it feeds back and makes the agent better. Judgment without feedback is just a gate.

“If human-in-the-loop is employed due to lack of confidence in the agent, people will introduce workarounds that negate the value from the agent.”

Read that again. If the human is there because you don’t trust the agent, that distrust will create workarounds that cancel out the agent’s value anyway. You’ve spent the engineering time. You’ve shipped the workflow. And the humans have quietly found ways around it, because the uncertainty was real and nobody addressed it.

Her two filters for when judgment actually has an outsized impact:

Consequences are irreversible or reputationally costly

The human’s judgment improves the system long-term - it feeds back, it trains it

Everywhere else: get out of the loop.

Her spectrum slide - binary feedback on one end, open-ended review on the other - landed a practical insight I hadn’t fully articulated before.

Binary review is a weak signal for an agent - it’s a simple yes/no, approve/decline, with no explanation attached. The agent knows what you decided, but not why. But open-ended review at scale is expensive, slow, and requires accessing user input that isn’t always available.

The hybrid she implied but didn’t name: binary by default, open-ended on declines only. You get the “why” where it matters most - when something is declined - without the cost of open-ended review on everything.
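Here is a minimal sketch of how that hybrid could look in code. It is my own illustration, not something from the talk: the names `Review`, `record_review` and `feedback_for_agent` are hypothetical, and the only rule it encodes is that an approval needs no explanation while a decline must carry one.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Review:
    """One human decision on an agent proposal (hypothetical structure)."""
    proposal_id: str
    approved: bool
    reason: Optional[str] = None  # free-text "why", only required on declines


def record_review(proposal_id: str, approved: bool,
                  reason: Optional[str] = None) -> Review:
    """Binary by default; a decline must come with an explanation."""
    if not approved and not (reason and reason.strip()):
        raise ValueError("A decline needs a reason so the agent can learn from it")
    return Review(proposal_id, approved, reason.strip() if reason else None)


def feedback_for_agent(reviews: list[Review]) -> list[str]:
    """Collect the explanations attached to declines: the richer signal."""
    return [r.reason for r in reviews if not r.approved and r.reason]
```

Approvals stay a one-tap action, and the reviewer's effort is spent only where the agent got it wrong.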

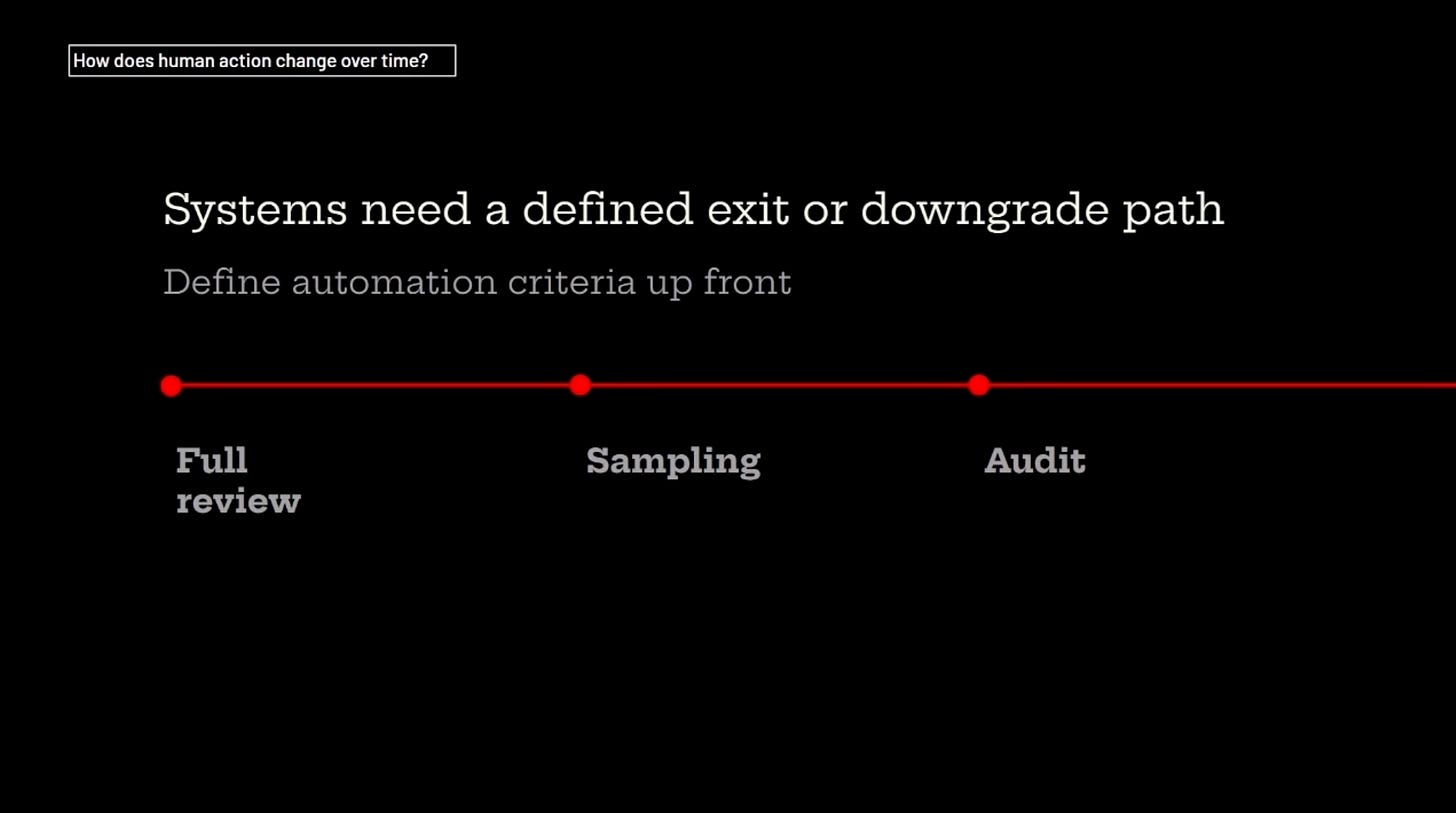

There was one more slide I photographed but didn’t manage to capture in my notes. The question at the top: “How does human action change over time?” Her answer: systems need a defined exit or downgrade path, with automation criteria set up front. Full review → Sampling → Audit.

You don’t stay at full review forever. You plan for how the human steps back as confidence builds. That progression felt like the practical missing piece of most human-in-the-loop implementations I’ve seen.
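To make "automation criteria set up front" concrete for myself, I sketched the progression as a small function. Everything here is an assumption for illustration: the thresholds are placeholder numbers, not figures from the talk, and `review_mode` is a hypothetical name.

```python
from enum import Enum


class ReviewMode(Enum):
    FULL = "full review"   # a human checks every output
    SAMPLING = "sampling"  # a human checks a fraction of outputs
    AUDIT = "audit"        # a human checks after the fact


# Hypothetical exit criteria, agreed before launch:
# (minimum reviewed decisions, minimum approval rate).
SAMPLING_AFTER = (200, 0.95)
AUDIT_AFTER = (1000, 0.99)


def review_mode(reviewed: int, approved: int) -> ReviewMode:
    """Pick the review level from the agent's track record so far."""
    if reviewed == 0:
        return ReviewMode.FULL
    rate = approved / reviewed
    if reviewed >= AUDIT_AFTER[0] and rate >= AUDIT_AFTER[1]:
        return ReviewMode.AUDIT
    if reviewed >= SAMPLING_AFTER[0] and rate >= SAMPLING_AFTER[1]:
        return ReviewMode.SAMPLING
    return ReviewMode.FULL
```

Because the mode is recomputed from the track record, the same criteria also act as a way back: if the approval rate drops, the system returns to full review.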

FYI - I was trialling my content pipeline from #96 on my phone to capture notes from the day. It worked quite well, and I have it set up to throw me reflection questions at the end of each talk. The question for this talk: where is a human acting as a bottleneck rather than a quality gate in your own workflows? My answer: “I am my own bottleneck.” I saved it as a note and thought about it for the rest of the day.

Outcomes, Not Outputs

Kerry Small (COO, BT Group) runs a 180-year-old company. She doesn’t have the luxury of treating transformation as optional. [Recording Link]

“In the era of AI, it’s about outcomes, not how we ship those products and features.”

Her three levers for making that real rather than aspirational:

Performance metrics - measuring against outcomes, not outputs

Hiring for AI-native employees - changing what you look for in new hires

Coaching culture - bringing in people who can actually be coached, and managers who know how to do it

When Carlos pressed her on how you prioritise which bets to back, she had three filters:

Minimum 20% growth potential

Keep the portfolio tight - too many fronts means accumulating debt across all of them

Where there’s regulatory or legal risk, build it as a platform from the start, not a one-off

The two questions she gave for evaluating agentic bets specifically were the most practical takeaway of the session:

Will it solve a product problem or an internal problem? Can you attach a KPI to it?

If you can’t answer both, keep moving.

Pavel Fabrikantov (SVP Product, Semrush) made the mirror argument from a direction I hadn’t considered. [Recording Link]

“AI systems are now making pre-selection decisions about your product before a human ever sees it.”

Not occasionally. Routinely.

80% of global B2B tech buyers are using AI as much as traditional search when researching vendors. Something I found interesting: according to Pavel, the model that’s deciding whether to recommend you is likely GPT-4o mini, because it’s the cheapest, not the smartest.

“This traffic does not show up on a dashboard.”

That line sat with me. You can’t see what you’re losing. There’s no referral source. No drop-off metric. The customer who never arrived because an AI system didn’t mention you - that gap is invisible in your current analytics.

His practical takeaway was the most actionable of the morning: audit per model, not per “AI” as a single channel. 50 queries, 4 platforms. Track whether you’re mentioned, whether it’s accurate, and who wins when you’re not. That’s the new competitive landscape - and most product teams haven’t looked at it yet.
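A rough sketch of how I would tabulate an audit like that. The schema and names (`AuditRow`, `summarise`) are hypothetical and the scoring is assumed to be done by hand; the one design choice that matters is that results are reported per platform and never pooled into a single "AI" number.

```python
from collections import Counter, defaultdict
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class AuditRow:
    """One query run against one platform, scored by hand (hypothetical schema)."""
    platform: str
    query: str
    mentioned: bool
    accurate: Optional[bool]  # None when the product was not mentioned
    winner: Optional[str]     # who was recommended instead, if anyone


def summarise(rows: list[AuditRow]) -> dict[str, dict]:
    """Report mention rate, accuracy and top rival for each platform."""
    by_platform: dict[str, list[AuditRow]] = defaultdict(list)
    for row in rows:
        by_platform[row.platform].append(row)

    report = {}
    for platform, items in by_platform.items():
        hits = [r for r in items if r.mentioned]
        rivals = Counter(r.winner for r in items if not r.mentioned and r.winner)
        report[platform] = {
            "mention_rate": len(hits) / len(items),
            # Accuracy is only meaningful for answers that mention the product.
            "accuracy": sum(1 for r in hits if r.accurate) / len(hits) if hits else None,
            "top_rival": rivals.most_common(1)[0][0] if rivals else None,
        }
    return report
```

With 50 queries on each of 4 platforms that is 200 rows, small enough to score in a spreadsheet and feed through something like this.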

The closing slide said it plainly: design for AI discovery, or cede that ground to competitors who do.

Liz Goulding (CPO, The Economist) followed with a talk on AI audio trust that deserves its own post - her live demo showing how hard it already is to distinguish human from AI narration was one of the more memorable moments of the morning. I didn’t capture it well enough to do it justice here, but the crowd engagement aspects really were powerful. Worth a watch here: [Recording Link]

After Lunch

Jim Kennedy (Global Product Lead, Jaguar Land Rover) framed the afternoon in one sentence:

“AI doesn’t on its own create value - it amplifies the systems it is added to.”

His carousel example made the same point in practice. Behavioural analytics at JLR showed users consistently ignoring their designed homepage experience and scrolling directly to one specific item every time - a clear signal of intent that the original design hadn’t accounted for. The shift that followed was from page-level thinking to journey-level thinking, and the AI didn’t surface the problem so much as make a signal that was already there impossible to keep ignoring. [Recording Link]

Nikita Miller (CPO, Perk) reframed the speed-versus-quality argument that tends to generate the most heat in product circles.

“Speed to learning is most important.”

That’s a different claim to just moving fast. It means you don’t need the full AI strategy mapped out before you start - you run the experiment, you learn faster than the people still waiting to feel certain, and quality becomes a consequence of better information rather than a trade-off against velocity. [Recording Link]

Speed Is the Wrong Question

Brent Barkman (VP Product, Miro) gave the most useful reframe of the afternoon. [Recording Link]

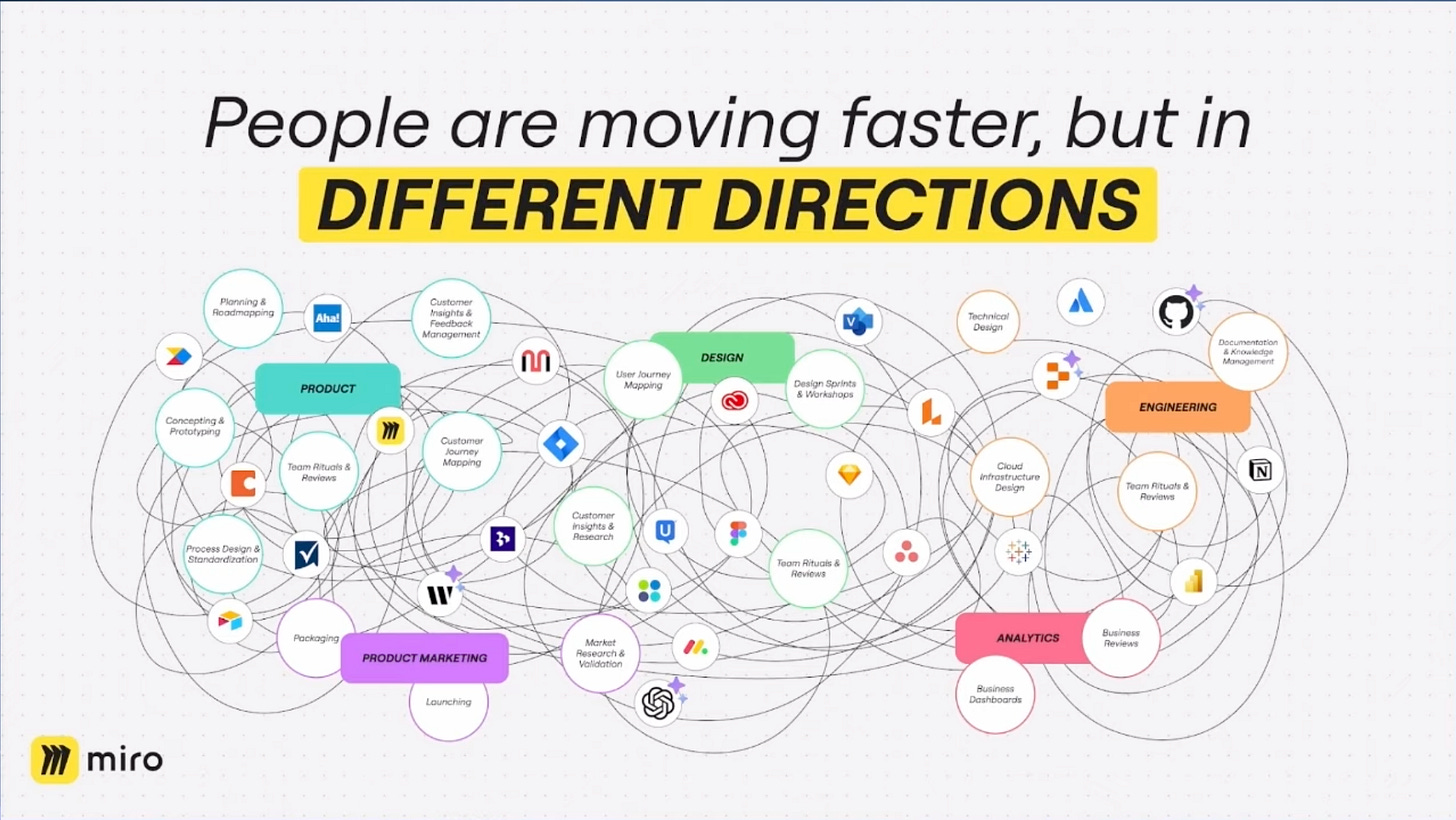

He opened with a slide that made the alignment problem visual rather than theoretical. A chaotic web of circles - Product, Design, Engineering, Analytics, Product Marketing - all tangled together with overlapping tools and workflows. The headline: “People are moving faster, but in DIFFERENT DIRECTIONS.”

“Alignment is the forever problem.”

The Before/After he showed was striking - Miro’s internal design process going from a 1-2 week roadmap-to-brainstorm-to-review cycle down to a 1-2 hour roadmap-to-co-creation meeting.

But the more useful insight wasn’t the speed number.

AI doesn’t just make you faster at the same process. It lets you explore more of the solution space in less time.

His slide made this point visually. Old process: explore part of the solution space over months, refine one idea, low probability of building the right thing.

New process: explore the entire solution space over weeks, find the best option first, then refine.

The speed is a side effect. The quality improvement is the point.

If you’ve read my last blog (#96), you’ll recognise the same idea in a different context - collapsing the loop between idea and iteration changes what you build, not just how fast you build it.

His honest retrospective was the thing I appreciated most.

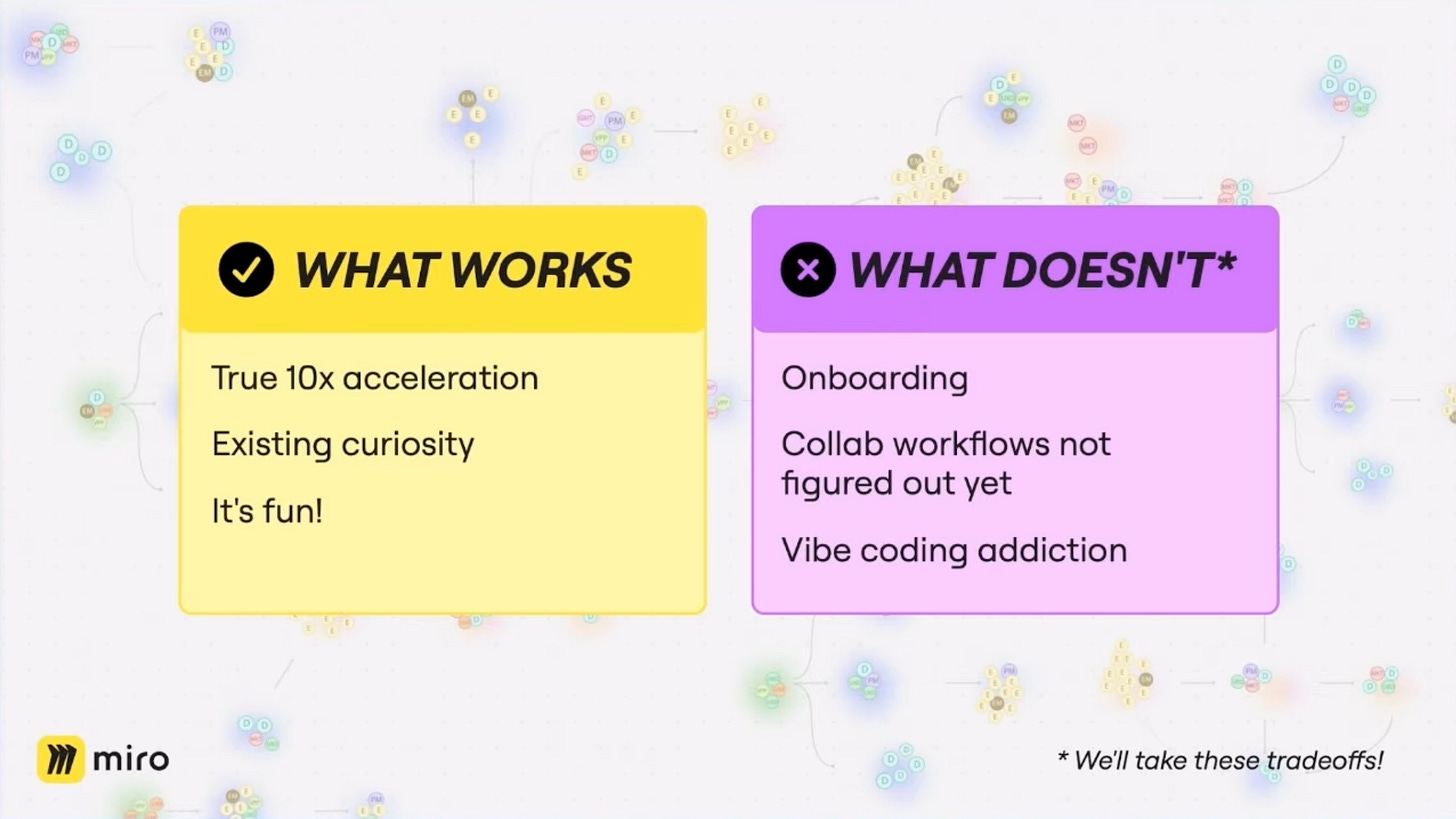

What works: true 10x acceleration, existing curiosity, it’s fun.

What doesn’t: onboarding, collab workflows not figured out yet, vibe coding addiction.

“We’ll take these tradeoffs.”

One cultural detail worth preserving as part of this talk: Miro use the internal hashtag #badversion for unrefined AI output. A shared signal that something is a starting point, not a finished artefact. It lowers the pressure to present polished work, and makes it safe to share early.

That’s not a tool choice. That’s a cultural choice that enables the tool to work.

This landed for me personally.

It’s something I’ve been wrestling with - when you’re using AI heavily, you can scan a document in seconds and know if it’s accurate. But you haven’t had time to refine it into your own voice yet, and neither has anyone else.

So when you share it, trust takes a hit before the content even gets a chance.

What you actually need is a shared signal:

yes, this is accurate, but it hasn’t been refined yet - or part three is good, ignore the rest.

That’s what #badversion is. Ts and Cs for AI output.

It reminded me of something Dharmesh Shah wrote about - surfacing his suggestions with a clear label so the reader knows how Dharmesh feels about the suggestion before they decide how much weight to give it. The framing changes everything.

Brent’s closing three things for product leaders:

Shift to an abundance mindset. Spend the tokens.

Be a builder who collaborates with agents as teammates.

Iterate on your ways of working until they work for your people and org.

The third one is the one most teams won’t do.

And then, almost as an aside, he mentioned Miro’s MCP.

This got me very excited.

The Miro Detour

I’ve been watching board-level agent interaction tools for the better part of a year. Not screenshot wrappers. Structural interaction - agents that can read, write, and reason about a board’s contents directly.

If you’ve seen any of my Miro work, you’ll know I’m a genuine fan. My whole discovery framework lived in Miro - user journeys, discovery boards, all of it. I even published a continuous discovery board template so others could download and use it.

And then Claude Code arrived.

Almost overnight, my pace with Claude outstripped what I could maintain in Miro. Getting content back out of Claude and into a board became the bottleneck - everything else had sped up, but the workshopping hadn’t. My user journeys got more detailed, more nuanced, richer in content. They also ended up in GitHub, which is not exactly a great place to share them with a team. All my Miro diagrams quietly fell out of date. The tool I’d relied on the most for discovery became the one that could no longer keep up with me.

For the last year, basically, I’ve been asking: where is Miro’s MCP?

I told myself they must be building a good one and just hadn’t shipped it yet. I hoped that was true.

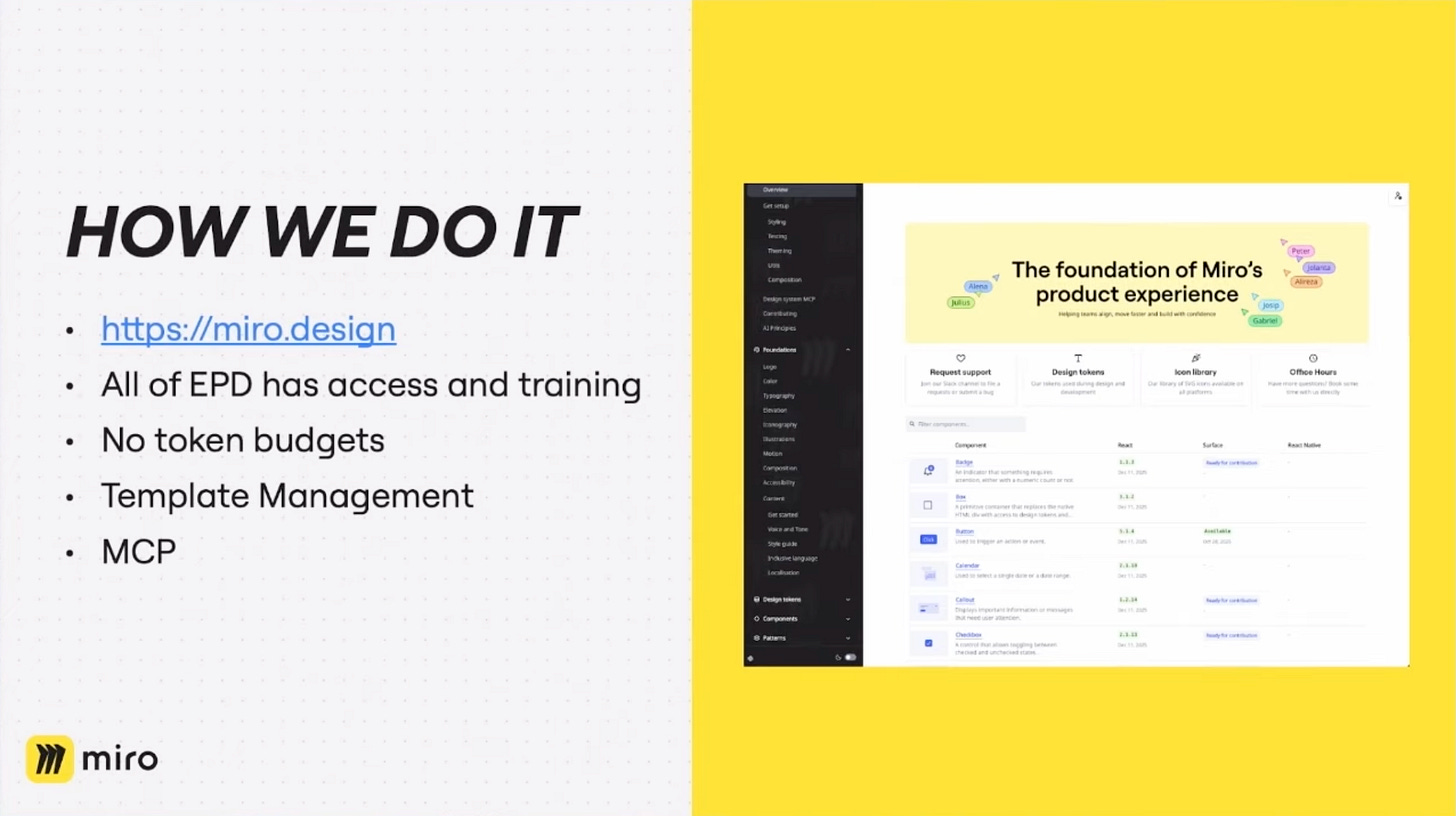

The HOW WE DO IT slide listed it almost casually.

I stared at that slide for a moment. MCP? What?

When did this happen? How did I not know about this?

When 14:15 rolled around I stood up, convinced it was the coffee break. It wasn’t. I made a beeline for the Miro stand and spent the next hour in a hands-on demo with one of the leads on the Miro MCP project. Watching an agent read a board, create objects, and manipulate the content and structure of a board directly (with very nice UX) feels different from watching it summarise a screenshot. It looks like they have built it to feel less like automation and more like collaboration - which is a good summary of their company motto in general.

I haven’t had access yet - expecting it in the next day or two. But what I saw looked genuinely powerful, and - importantly - it looks like it fits directly into my agent ecosystem rather than funnelling everything through Miro’s own internal AI assistant.

There are protections against accidental changes, the scope is broad, and the direction they’ve gone with it is exactly what I was hoping for. I’m fairly confident there are multiple blog posts in this.

The vibe coding workshop for non-technical PMs, led by Simon Kubica (CEO, Alloy), had been running since 14:15 - the slot I’d just walked out of.

Simon, if you’re reading this: I owe you a recording view (everyone else, you can see it here - along with the rest of the talks). The entire theme of this post is product people getting out of their own way. I was at the Miro stand. I have no defence.

(Miro MCP links for anyone who wants to take a look: Docs - GitHub)

When I wandered back into the auditorium, the panel was starting. Debbie McMahon (VP Product, Loveholidays - who was also the MC for the day) was moderating Andrew Taylor (VP Product, Contentsquare), Karel Callens (CEO, Luzmo), Maya Toutountzi (Head of Product, XTM International), and Bobby Gill (Head of Product AI, Tesco) on bringing AI capabilities to non-AI-native products. My head was still half inside a Miro board, so I didn’t capture anything from this session either - sorry! You can listen to it here.

CPOs Are the New GMs

Maria Parpou (CPO, Mastercard Merchant Solutions) gave the sharpest provocation of the afternoon. [Recording Link]

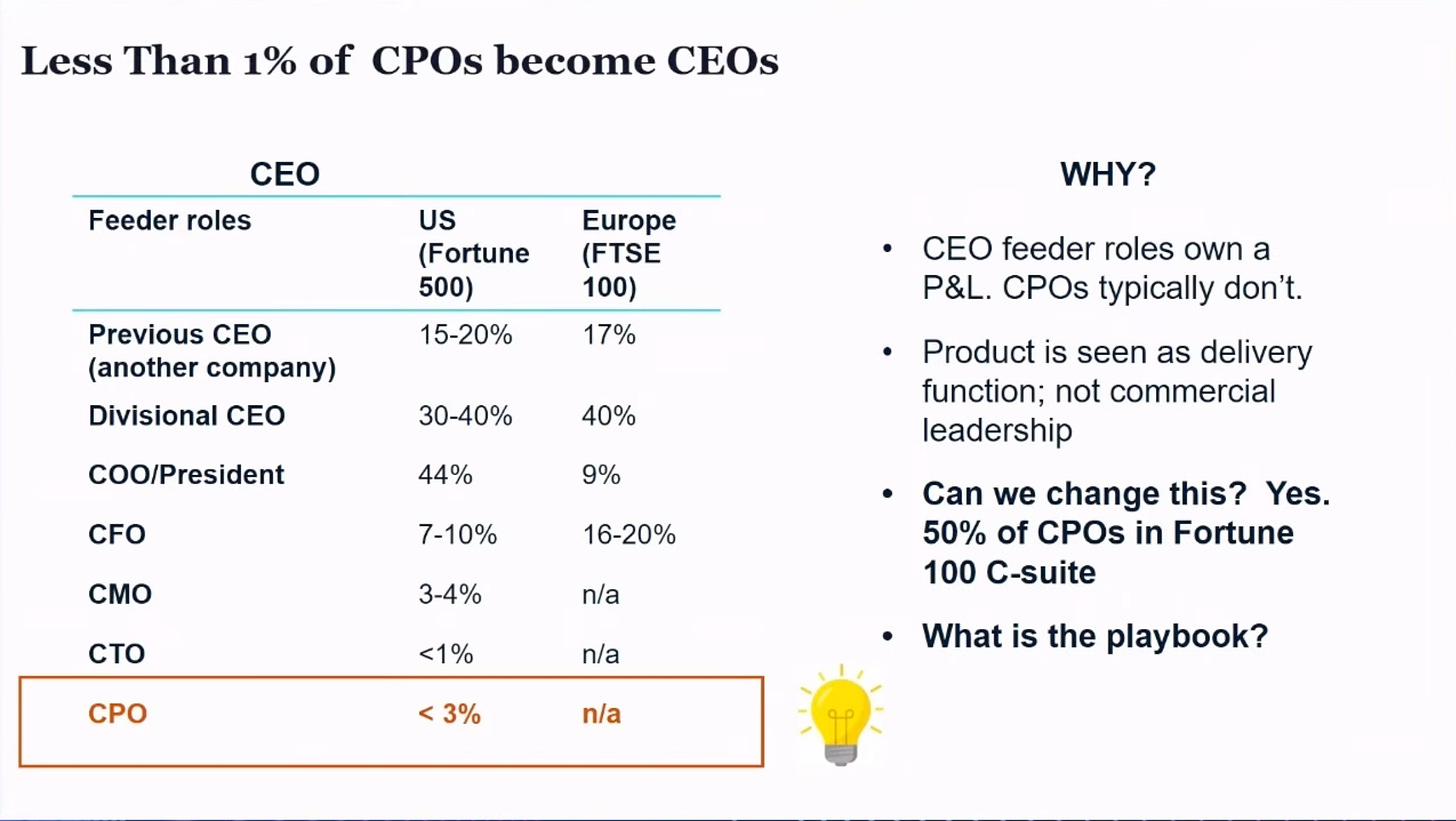

She opened with a slide that made the audience uncomfortable very quickly. Less than 3% of Fortune 500 CPOs become CEOs. The table made the reason plain: CEO feeder roles own a P&L. CPOs typically don’t. Product is seen as a delivery function, not commercial leadership.

“If you don’t own the numbers, you don’t own the strategy. You’re just making suggestions.”

Her playbook had three parts. First: own the P&L. Not nominally - actually. Know your unit economics, your marginal costs, how your pricing is engineered. If you’re not in that conversation, you’re not in the strategy conversation.

Second: design for distribution. Channel strategy is product strategy. The distribution channel is often more important than the product itself - a point she attributed to Drucker and applied directly to the emerging reality of agentic commerce.

You can’t design a product and then force it into the sales channel as an afterthought.

Third: escape the duality trap. Stop treating Product Development and Product Proposition as two separate tracks. The “all-round product manager” owns the narrative, not just the roadmap.

“What are the tasks I am doing that are not going to help me be a great advisor to the CEO - and automate that away. So you can start thinking not about roadmaps, but about gross outcomes and real impacts.”

That last line is a mirror too, in its own way.

The Mirror

Jessica Hall (CPO, Just Eat Takeaway) closed the day. [Recording Link]

Her talk had the same structure as the day itself - it built to the thing it was always going to say.

The best concept of the day came from her: Human Glue.

The people in your organisation who exist to hold together a workflow that should not require them. The unwritten rule. The manual step. The person who “just knows” how to make it work.

“Simplify then automate was the way we used to think about automation of messy processes. Now if you automate a mess with AI, you just end up with an expensive AI mess.”

Her audit is simple: find the person on your team who is required to enable a workflow that should be self-serve. That’s not a resource problem. That’s a signal. The human is there because the process is broken, and the process is broken because no-one has been forced to fix it yet.

“Which ‘unwritten rule’ is currently acting as a tax on your team’s ability to scale at the speed of AI?”

She had a slide I hadn’t seen coming. “Creators to Curators.” Stop worshipping at the altar of the ‘feature.’ Apply your mirror to your actual problems. Don’t build the logic - curate the model that generates it. The shift from weeks to hours is on the other side of that mindset change, not on the other side of a tool decision.

JET holds 104 petabytes of data. 570 million events a day. At that scale, the old answer to a broken process was to throw headcount at it. That answer stopped working a while ago. The question she left the room with:

“Is your data foundation designed to provide answers, or is it just a pile of raw material that requires a plumber to navigate?”

And then, the closing slide. A silhouette walking toward light. Two sentences on a quote card that pulled the whole day together:

“AI isn’t just a tool for your product, it’s the high-definition mirror for your business. What it reveals is yours to fix.”

That was it. That was the whole day on one slide.

The Thing Stopping You

The event opened with a slide: BUILD SOMETHING THAT DOESN’T EXIST.

Every speaker was addressing a different face of the same underlying problem.

Peiris on the debt you’re avoiding. Hall on the humans masking broken processes. Nutter on the loops that don’t teach. Kennedy on amplification as a mirror. Barkman on what leaning in structurally actually looks like. Parpou on the commercial outcomes product leaders keep handing to someone else.

The thing you’re walking away to build doesn’t exist yet.

But the thing stopping you from building it probably does. It’s just been invisible.

Until now.

Which of these talks made you uncomfortable? That’s probably the one worth acting on first.

If this landed for you, #87 (Before AI Can Help, UX Leaders Must Solve Operational Tech Debt) connects directly to Hall’s Human Glue argument.

I’d love it if you subscribed - I’m trying to build something useful for people working at the sharp end of product.